For companies everywhere, the adoption of AI agents isn’t optional. It’s essential to staying competitive. But when deployed without oversight, AI agents become yet another vector in the growing sprawl of shadow AI.

Shadow AI continues to expand as employees turn to GenAI tools, unauthorized personal accounts for SaaS applications, and other unsanctioned resources to unlock productivity gains outside IT’s purview.

The risks are real, from data exposure to a loss of visibility, so it’s understandable that companies would want to limit AI use altogether. But broad restrictions aren’t the answer.

Governance, not gatekeeping, is a security leader’s best tool

In this era, the focus for security leaders should be on secure enablement, not blocking activity, says Sandeep Kumbhat, Okta Head of Global Field CTO. Enabling agentic access to applications may improve user experiences, but it must be done properly to avoid exposing enterprise data and significantly increasing risk.

“There is a mismatch between the good intentions that the stakeholders have and how much they expose if they do not know the lifecycle of these agents,” Kumbhat says. “Our idea is not to penalize, it’s to discover those agents and bring them under management.”

At the RSA Conference in San Francisco on March 24, Kumbhat will discuss how organizations can secure AI agent identity and address the challenges posed by shadow AI. Akhila Nama, Head of Enterprise Security at Box, is slated to join him.

Shadow AI is risky. AI agents only make it riskier.

The non-deterministic behavior of AI agents poses unique security challenges. Enterprises must not only secure the agents themselves but also govern them across their full lifecycle, from registration through deprovisioning.

“For a non-deterministic agent, you have to secure every single thing it does, not just the agent itself,” says Kumbhat. “So there is agent security you need to have when you build an agent, but then you also need to secure all the transactions, or authorize all the transactions that an agent does.”

The challenge is compounded by how easily shadow AI now enters an enterprise.

Why you need to be concerned about shadow AI

Studies show that employees turn to shadow AI largely for one reason: productivity gains. The paths in are more plentiful than ever. Any employee with a free online account can use SaaS-based GenAI, and low- and no-code development platforms let employees build their own custom AI agents and integrations.

Left unmanaged, AI agents increase the risk of regulatory compliance violations, leaks of sensitive data, and security attacks. Many business executives are responding by reducing the friction of adopting AI tools while focusing on establishing visibility and governance.

The RSAC session will stress the importance of mechanisms that discover shadow AI while still empowering employees to adopt the tools they need. Assigning owners to discovered agents creates accountability and strengthens enterprise governance, Kumbhat adds. He notes that AI agents are often deployed at the departmental rather than the corporate level, which leads to department-specific shadow AI.

Every authorization and transaction involving an AI agent must be secured to prevent data exposure, he says.

“There needs to be a continuous mechanism to discover all the agentic access that is out there in your enterprise and bring them into management,” says Kumbhat.

Building a foundation for agentic security

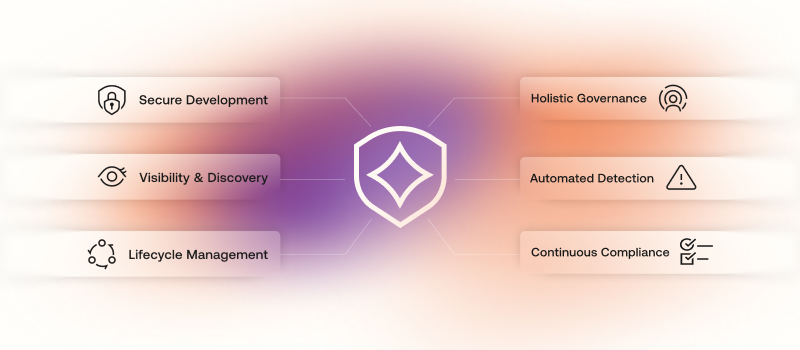

The primary goal of the session is to make security teams aware of the guiding principles for securing agentic access, he says. Those principles include:

Leveraging secure development practices, such as token vaulting

Gaining visibility into shadow AI as well as authorized agents

Implementing effective lifecycle management for all AI agents

Enforcing comprehensive governance through access reviews and certifications

Automating threat detection and response with behavioral analytics

Maintaining audit capabilities that support regulatory compliance efforts

“The core thing we need to make sure we do is to secure the transactions,” Kumbhat says. “Not being afraid of AI is one of the themes I’m going to talk about. It is here for us to embrace it, but very carefully from a security perspective.”

The session, titled “Shadow AI: Securing the Rise of the Autonomous Super Admin,” will be held at 8:30 a.m. PDT on March 24.

To learn more about how to solve the challenges of shadow AI, read “Okta secures AI: A sneak peek into the visibility that turns shadow AI into managed identity.”